How Bitcoin Core's Fee Estimator Works (And Why It Sometimes Doesn't)

Bitcoin Core’s fee estimator answers a simple question: “If I want my transaction confirmed within N blocks, what fee rate should I use?”

It’s called thousands of times per day through the estimatesmartfee RPC command. Wallets, services, and Lightning nodes all rely on it. But the answer it gives isn’t based on what’s happening in the mempool right now. It’s based on what happened in the past.

That design choice makes it resistant to manipulation but slow to react. When fees spike or crash, users overpay or miss confirmation targets. Here’s why that happens, how the algorithm actually works, and what’s coming to fix it.

The core problem: historical data only

Bitcoin Core’s CBlockPolicyEstimator is backward-looking by design. It tracks which transactions entered the mempool and how many blocks later they got confirmed. From that history, it builds a statistical model: “In the past, transactions paying X sat/vB confirmed within Y blocks 95% of the time.”

This works decently when fee markets are stable. But when conditions change rapidly, the estimator lags. By design.

Bitcoin Core Issue #27995 describes the fundamental limitation:

“Because it’s solely based on historical data (looking at how long mempool transactions take to confirm), it cannot react quickly to changing conditions.”

When fees suddenly spike during an ordinals flood or halving hype, the estimator doesn’t count those high-fee transactions until they confirm in a block. Until it adjusts, users who follow its estimates underpay and miss their targets.

Conversely, when fees crash after congestion clears, the estimator still recommends high fees based on data from hours or days ago. Users overpay massively.

Real-world example: Block’s Augur benchmarking during the April 2024 halving showed Blockstream.info’s estimates recommended fees 224% higher than necessary to maintain low miss rates. That’s telling users to pay $50 when $15 would suffice.

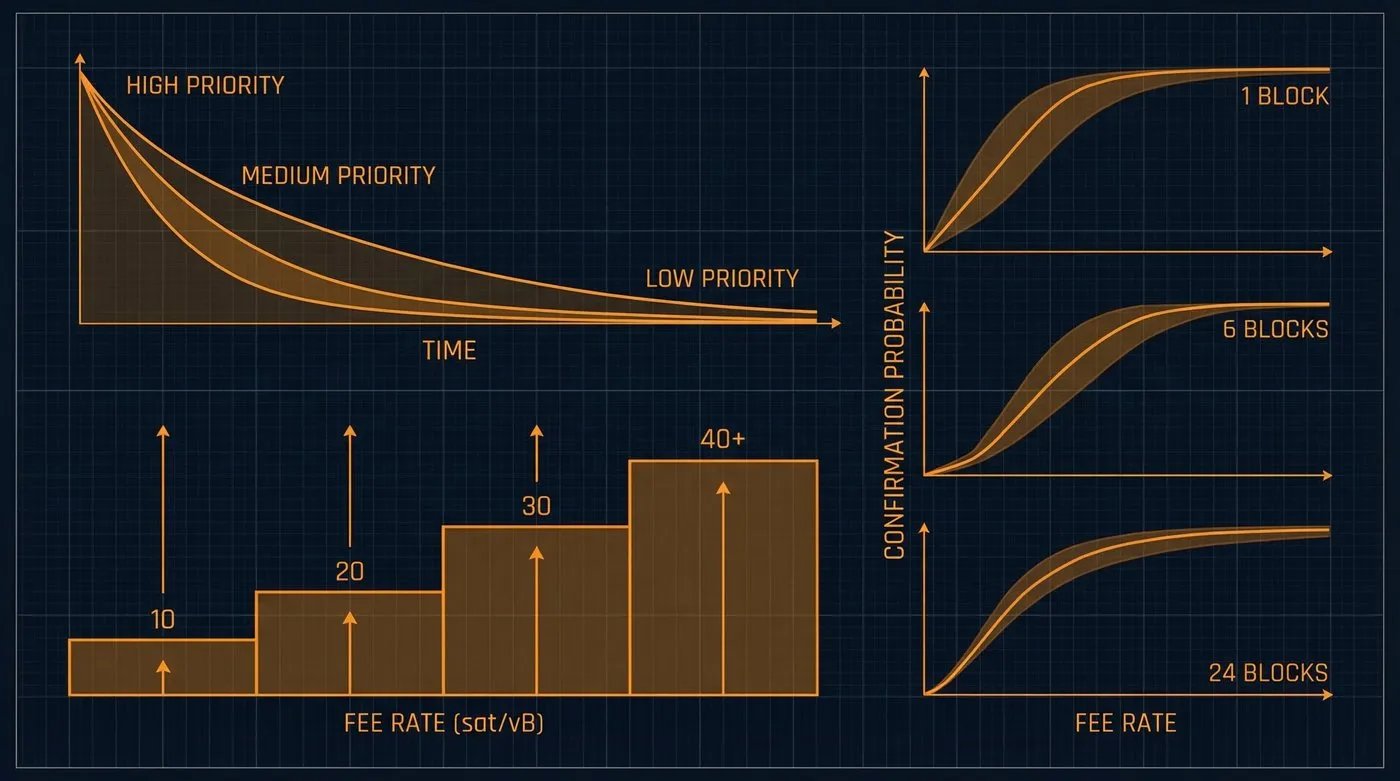

How it works: fee rate buckets and exponential decay

Grouping transactions into buckets

Transaction fee rates span an almost continuous range from 1 sat/vB to tens of thousands. Tracking every individual fee rate would be computationally expensive, so Bitcoin Core groups them into fee rate buckets.

According to Bitcoin Core PR Review Club #31664, these buckets are exponentially spaced by a factor of 1.05. Boundaries are expressed in sat/kvB, where 1,000 sat/kvB equals 1 sat/vB:

- Bucket 1: 1,000 to 1,050 sat/kvB

- Bucket 2: 1,050 to 1,102.5 sat/kvB

- Bucket 3: 1,102.5 to 1,157.6 sat/kvB

- …up to 10,000,000 sat/kvB

This exponential spacing allows the estimator to cover four orders of magnitude (1,000 to 10,000,000 sat/kvB) with roughly 190 buckets, instead of one counter per distinct fee rate.
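The bucket layout is easy to reproduce. The following is a minimal sketch, assuming the 1.05 spacing and the 1,000 to 10,000,000 sat/kvB range quoted above; the function names are hypothetical and are not Bitcoin Core identifiers.

```python
import bisect

MIN_FEERATE = 1_000        # sat/kvB, lower bound of the first bucket
MAX_FEERATE = 10_000_000   # sat/kvB, top of the tracked range
SPACING = 1.05             # each boundary is 5% above the previous one


def build_boundaries(lo=MIN_FEERATE, hi=MAX_FEERATE, spacing=SPACING):
    """Return the lower boundary of every bucket, in ascending order."""
    boundaries = []
    boundary = float(lo)
    while boundary <= hi:
        boundaries.append(boundary)
        boundary *= spacing
    return boundaries


def bucket_index(feerate, boundaries):
    """Index of the bucket whose [lower, upper) range contains feerate."""
    # bisect_right counts how many boundaries are <= feerate.
    idx = bisect.bisect_right(boundaries, feerate) - 1
    # Clamp anything below the first boundary into bucket 0.
    return max(idx, 0)


boundaries = build_boundaries()
print(len(boundaries))                   # total number of buckets
print(bucket_index(1_075, boundaries))   # 1,075 sat/kvB lands in the second bucket
```

A lookup is a binary search over the boundaries, so placing a transaction in its bucket stays cheap no matter how wide the fee range is.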

Tracking confirmation history

For each bucket and confirmation target (e.g., “confirm within 6 blocks”), the estimator keeps two counters:

- Total transactions that entered the mempool in that bucket

- Transactions confirmed within the target number of blocks

Alex Morcos’s canonical algorithm description explains what happens when a transaction confirms:

“So if a block includes a specific transaction T, when you look up its mempool entry and see it entered the mempool 12 blocks ago, you then increment the counters for targets 12-25 for its fee rate bucket and increment the total tx count for that bucket.”

If a transaction is removed from the mempool without being confirmed (evicted, replaced, etc.), it’s counted as a failure.
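The bookkeeping can be sketched as follows. This is an illustration only, assuming a maximum tracked target of 25 blocks as in the quote above; the class and method names are hypothetical and the real data structures in Bitcoin Core differ.

```python
from collections import defaultdict

MAX_TARGET = 25  # highest tracked target in this sketch, taken from the quote


class BucketStats:
    """Per-bucket counters for confirmations and failures (hypothetical)."""

    def __init__(self, max_target=MAX_TARGET):
        self.max_target = max_target
        # total[bucket]: transactions counted for that bucket
        self.total = defaultdict(float)
        # confirmed[bucket][target]: confirmed within `target` blocks
        self.confirmed = defaultdict(lambda: [0.0] * (max_target + 1))
        # failed[bucket]: left the mempool without confirming
        self.failed = defaultdict(float)

    def record_confirmation(self, bucket, blocks_to_confirm):
        """A tx that took N blocks counts as a success for every target >= N."""
        self.total[bucket] += 1
        for target in range(max(blocks_to_confirm, 1), self.max_target + 1):
            self.confirmed[bucket][target] += 1

    def record_removal(self, bucket):
        """Evicted or replaced before confirming: counted as a failure."""
        self.failed[bucket] += 1


stats = BucketStats()
stats.record_confirmation(bucket=3, blocks_to_confirm=12)
print(stats.confirmed[3][11], stats.confirmed[3][12], stats.confirmed[3][25])
```

A transaction that took 12 blocks says nothing good about an 11-block target, which is why only the counters from 12 upward move.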

Exponential decay for recency weighting

The estimator doesn’t treat all history equally. Older data is exponentially decayed every block so recent events carry more weight.

Bitcoin Core 0.15 introduced three time horizons with different decay rates:

| Horizon | Decay Rate | Half-Life | Targets Covered | Use Case |
|---|---|---|---|---|
| Short | 0.962 | 18 blocks (~3h) | Up to 12 blocks | Rapid market changes |
| Medium | 0.9952 | 144 blocks (1d) | Up to 48 blocks | Balanced estimates |
| Long | 0.99931 | 1,008 blocks (1w) | Up to 1,008 blocks | Conservative, slow-changing |

Each horizon maintains its own bucket counters. This allows the estimator to choose the shortest horizon that has enough data for the requested target.
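The half-lives in the table follow directly from the decay rates: a counter multiplied by the decay rate once per block falls to half its value after ln(0.5) / ln(decay) blocks. A short sketch to check the numbers (the helper names are hypothetical):

```python
import math


def half_life(decay):
    """Blocks until a counter decayed once per block drops to 50%."""
    return math.log(0.5) / math.log(decay)


def apply_decay(counter, decay, blocks=1):
    """Value of a counter after `blocks` blocks with no new data."""
    return counter * decay ** blocks


for name, decay in [("short", 0.962), ("medium", 0.9952), ("long", 0.99931)]:
    print(f"{name}: half-life of about {half_life(decay):.0f} blocks")

# Weight left on a data point after one day (144 blocks) in each horizon.
for name, decay in [("short", 0.962), ("medium", 0.9952), ("long", 0.99931)]:
    print(f"{name}: {apply_decay(1.0, decay, 144):.3f} of original weight")
```

The results come out at roughly 18, 144, and 1,004 blocks, in line with the rounded three-hour, one-day, and one-week figures in the table.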

Calculating the estimate

When estimatesmartfee is called with a target (e.g., 6 blocks), it:

- Selects the shortest horizon that covers that target

- Starts at the highest fee rate bucket

- Calculates the success ratio: confirmed_in_target / (total_confirmed + still_in_mempool_for_≥target)

- Checks if this ratio meets the threshold (default: 95% for conservative, 85% for economical)

- If yes, moves to the next-lower bucket and repeats

- Returns the lowest bucket that still meets the threshold

John Newbery’s introduction to the algorithm describes this process:

“Keep working down the buckets until we find one where the probability of being confirmed is less than 95%. Take the previous bucket (the lowest value bucket that had a probability of at least 95% of being included) and return the median fee of all transactions in that bucket.”
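The scan described above can be sketched in a few lines. This is a simplified illustration, not the Bitcoin Core implementation: the dictionary keys are hypothetical, empty buckets are simply skipped, and any refinements the real code applies (such as requiring a minimum amount of data before trusting a bucket) are left out.

```python
def estimate_feerate(buckets, threshold=0.95):
    """Walk buckets from highest to lowest fee rate and return the median
    fee rate of the lowest bucket that still meets the threshold.

    `buckets` is ordered from lowest to highest fee rate. Each entry is a
    dict with the hypothetical keys 'median_feerate', 'confirmed_in_target',
    'total_confirmed' and 'still_in_mempool'.
    """
    best = None
    for bucket in reversed(buckets):
        denominator = bucket["total_confirmed"] + bucket["still_in_mempool"]
        if denominator == 0:
            continue  # no data for this bucket in the sketch
        ratio = bucket["confirmed_in_target"] / denominator
        if ratio < threshold:
            break     # first failing bucket: stop and keep the previous one
        best = bucket
    return None if best is None else best["median_feerate"]


# Made-up counters, fee rates in sat/kvB.
history = [
    {"median_feerate": 1_025, "confirmed_in_target": 40,
     "total_confirmed": 80, "still_in_mempool": 20},
    {"median_feerate": 2_000, "confirmed_in_target": 90,
     "total_confirmed": 100, "still_in_mempool": 5},
    {"median_feerate": 5_000, "confirmed_in_target": 99,
     "total_confirmed": 100, "still_in_mempool": 0},
    {"median_feerate": 10_000, "confirmed_in_target": 50,
     "total_confirmed": 50, "still_in_mempool": 0},
]

print(estimate_feerate(history, threshold=0.95))
print(estimate_feerate(history, threshold=0.85))
```

With these made-up counters, the 95% threshold stops at the 5,000 sat/kvB bucket, while the 85% threshold reaches one bucket lower. That gap is the whole difference between a cautious and a cheaper estimate.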

Conservative vs. economical modes

estimatesmartfee supports two modes, and Bitcoin Core 28.0 changed which one is the default:

- Conservative (formerly the default): Uses longer horizons, requires 95% threshold at 2× the target. Higher fees, slower to react to drops.

- Economical (new default as of 28.0): Uses shorter horizons, 85% threshold at the requested target. Lower fees, more responsive.

The switch to economical as the default was a direct response to widespread overestimation complaints. Because every caller that relies on the default picks it up automatically, that single change has likely reduced aggregate overpayment substantially.
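To pin the mode explicitly instead of depending on the node's default, pass it as the second parameter of estimatesmartfee. The sketch below only builds the JSON-RPC request body and converts the reply; it assumes the result carries a feerate field in BTC/kvB and an errors list when no estimate is available. The helper names are hypothetical, and sending the request (URL, authentication) is left out.

```python
import json


def build_request(conf_target, estimate_mode="economical", request_id="fee-sketch"):
    """JSON-RPC body for estimatesmartfee with an explicit mode."""
    return json.dumps({
        "jsonrpc": "1.0",
        "id": request_id,
        "method": "estimatesmartfee",
        "params": [conf_target, estimate_mode],
    })


def feerate_to_sat_per_vb(result):
    """Convert the 'result' object's feerate from BTC/kvB to sat/vB."""
    if "feerate" not in result:
        # Assumed shape: the node reports why it could not estimate.
        raise ValueError(f"no estimate available: {result.get('errors')}")
    sats_per_kvb = result["feerate"] * 100_000_000
    return sats_per_kvb / 1_000


print(build_request(6, "conservative"))
print(feerate_to_sat_per_vb({"feerate": 0.0001, "blocks": 6}))  # about 10 sat/vB
```

Setting the mode in the request keeps behavior stable across node upgrades, which matters for services that were tuned against the old default.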

Why it fails: common failure modes

Lag during rapid changes

This is the big one. Fee spikes or crashes aren’t reflected until transactions confirm.

When fees suddenly spike, the estimator doesn’t count high-fee transactions that are still waiting in the mempool. It only updates when they confirm in a block. By the time it adjusts, conditions may have changed again.

When fees crash, the estimator still recommends high fees based on historical data from hours or days ago.

Stale estimates after restart

If a node restarts, it loads fee estimates from disk, but that file might be hours or days old.

Bitcoin Core saves estimates to fee_estimates.dat periodically. Bitcoin Core Issue #27555 describes the problem:

“After conversation on irc it appears there’s some cases where Bitcoin core will serve feerate information even though it loaded a fairly old fee estimates file and is still syncing the chain. This is pretty dangerous for lightning nodes, at least on legacy channels or pre-package-relay.”

If network conditions changed while the node was offline, it will serve stale data until it accumulates fresh confirmation history.
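Callers that cannot tolerate stale estimates can add their own guard. The following is one possible sketch, assuming the caller has already fetched getblockchaininfo and that the reply includes the initialblockdownload flag and the tip's time field. The three-hour cutoff and the function name are hypothetical choices, not anything Bitcoin Core enforces.

```python
import time

MAX_TIP_AGE_SECONDS = 3 * 60 * 60  # hypothetical cutoff: tip older than 3 hours


def estimates_look_fresh(chain_info, now=None, max_tip_age=MAX_TIP_AGE_SECONDS):
    """Return False when the node is probably still catching up.

    `chain_info` is the decoded getblockchaininfo result (assumed fields:
    'initialblockdownload' and 'time', the tip's block timestamp).
    """
    now = time.time() if now is None else now
    # Treat a missing flag as "still syncing" to stay on the safe side.
    if chain_info.get("initialblockdownload", True):
        return False
    tip_age = now - chain_info["time"]
    return tip_age <= max_tip_age


info = {"initialblockdownload": False, "time": 1_700_000_000}
print(estimates_look_fresh(info, now=1_700_000_600))     # tip is 10 minutes old
print(estimates_look_fresh(info, now=1_700_020_000))     # tip is over 5 hours old
```

This only detects a node that is behind the chain tip. It does not prove the estimator has rebuilt enough confirmation history, so a cautious caller may also wait some number of blocks after sync before trusting the output.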

Overpayment in low-fee environments

When the mempool is nearly empty (1 sat/vB transactions confirming), the estimator may still recommend much higher fees.

This happens because:

- The historical data includes periods when fees were higher

- The decay is slow (half-life of 144 blocks for medium horizon = 1 day)

- The estimator has no awareness of current mempool depth

Bitcoin Core Issue #27995 notes:

“Because it’s aiming to match seen behavior rather than requirement, if some non-negligible fraction of users keeps paying a certain high feerate, it may try to match that, even if unnecessary for confirmation.”

Users can end up paying 5–10× the necessary fee even though blocks have spare capacity.

Not package-aware (CPFP blind spot)

The estimator only tracks individual transactions, not parent-child relationships.

If a low-fee parent is confirmed because a high-fee child paid for both (CPFP), the estimator records the parent as “confirmed at low fee in X blocks.” This skews estimates downward for that bucket, causing future transactions to underpay.

Bitcoin Core PR Review Club #31664 describes this limitation:

“Package unaware: Only considers individual transactions, ignoring parent-child relationships. As a result, it fails to account for CPFP’d transactions, which can lead to lower fee rate estimates, resulting in underpayment and missed confirmation targets.”

Multiple attempts to fix this (PR #23074, PR #25380, PR #30079) have stalled. The problem is harder than it looks.
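A worked example shows how large the blind spot can be. The numbers below are made up for illustration: a parent paying 1 sat/vB is confirmed only because its child lifts the combined package fee rate to 20 sat/vB.

```python
def feerate(fee_sats, vsize):
    """Individual fee rate in sat/vB."""
    return fee_sats / vsize


def package_feerate(txs):
    """Combined fee rate of a package: total fees over total vsize."""
    total_fee = sum(fee for fee, _ in txs)
    total_vsize = sum(vsize for _, vsize in txs)
    return total_fee / total_vsize


parent = (200, 200)     # (fee in sats, vsize in vB): 1 sat/vB on its own
child = (5_800, 100)    # 58 sat/vB, spends the parent's output

print(feerate(*parent))                  # what a per-transaction tracker records
print(package_feerate([parent, child]))  # what the miner actually evaluated
```

A tracker that looks only at the parent logs a confirmation at 1 sat/vB, even though the miner effectively accepted 20 sat/vB. That is the downward skew the Review Club notes describe.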

Policy divergence risk

Your node’s mempool might not match miners’ mempools.

Reasons for divergence:

- Network topology: Poorly connected nodes don’t see all transactions

- Policy differences: Pre-Taproot nodes won’t see Taproot transactions; nodes with different relay policies see different mempools

- Out-of-band transactions: Miners may include transactions submitted directly (not relayed publicly)

If your mempool is missing high-fee transactions, your estimates will be too low. If it contains unconfirmable transactions due to looser local policy, estimates will be too high.

Design tradeoffs: why it’s built this way

Manipulation resistance

The estimator’s reliance on confirmed transactions is a deliberate security choice.

If it only looked at blocks (not mempool history), miners could trivially game it by stuffing blocks with high-fee private transactions. Those never hit the public mempool, so the miner collects its own fees back and pays only the opportunity cost of excluding real transactions.

By requiring transactions to be seen in the mempool first, the attack cost increases dramatically. A miner would have to broadcast high-fee transactions, risking another miner including them and collecting those fees.

“If we only looked at historic blocks, our fee estimation algorithm would be trivially gameable by miners. A miner could stuff his block with private, high fee rate transactions. […] If our fee estimator requires that transactions be seen in the mempool before they’re included in a block, then the cost increases dramatically and the attack becomes infeasible.”

Simplicity and transparency

The algorithm is deliberately not predictive. It doesn’t try to model future demand or supply. It simply reports: “In the past, this fee rate worked X% of the time for this target.”

This makes the logic easy to audit and reason about. There’s no opaque ML model or complex forecasting. The estimate is directly traceable to historical bucket counters.

“Bitcoin Core’s fee estimation doesn’t try to be too smart. It simply records and reports meaningful statistics about past events, and uses that data to give the user a reasonable estimate […] It doesn’t try to be forward-looking, and it’s easy to describe exactly why a given estimate has been produced based on past statistics.”

Why mempool-based estimation is being added

Bitcoin Core is actively working on a hybrid approach: keep CBlockPolicyEstimator for manipulation resistance, but add a separate mempool-based forecaster that can react in real time.

The forecaster architecture (in progress)

Issue #30392 outlines a new system:

- FeeRateForecasterManager coordinates multiple forecasters

- CBlockPolicyEstimator (existing) - historical data, manipulation-resistant

- MempoolForecaster (new) - generates block templates from current mempool, extracts 50th and 75th percentile fee rates

The manager can blend estimates or use mempool data as a sanity check to lower overly high historical estimates.

Bitcoin Core PR #31664 describes the MempoolForecaster:

“MempoolForecaster class: Inherits from Forecaster and Generates block templates and extracts the 50th and 75th percentile fee rates to produce high and low priority fee rate estimates”

“Performance optimization: Implements a 30-second caching mechanism to prevent excessive template generation and mitigate potential DoS vectors”
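The two ideas in those quotes, percentiles over a block template and a 30-second cache, can be sketched as follows. This is not the code from PR #31664. It assumes the template is a list of (fee rate, vsize) pairs in mining order and that percentiles are measured by cumulative size from the top of the block, so the 50th percentile comes out higher than the 75th. All names are hypothetical.

```python
import time


def percentile_feerates(template, percentiles=(0.50, 0.75)):
    """Fee rate reached after each fraction of the template's total vsize.

    `template` is a list of (feerate_sat_per_vb, vsize), highest fee rate first.
    """
    total = sum(vsize for _, vsize in template)
    if total == 0:
        return {}
    results = {}
    remaining = sorted(percentiles)
    cumulative = 0
    for feerate, vsize in template:
        cumulative += vsize
        while remaining and cumulative >= remaining[0] * total:
            results[remaining.pop(0)] = feerate
    return results


class CachedForecast:
    """Rebuild the forecast at most once per `ttl` seconds."""

    def __init__(self, build_template, ttl=30.0, clock=time.monotonic):
        self._build_template = build_template
        self._ttl = ttl
        self._clock = clock
        self._stamp = None
        self._value = None

    def get(self):
        now = self._clock()
        if self._stamp is None or now - self._stamp >= self._ttl:
            self._value = percentile_feerates(self._build_template())
            self._stamp = now
        return self._value


template = [(50, 100), (20, 100), (10, 100), (2, 100)]
forecast = CachedForecast(lambda: template)
print(forecast.get())  # high priority at 0.5, low priority at 0.75
```

Caching matters because building a block template is far more expensive than reading bucket counters, and an RPC that anyone with access can call repeatedly should not trigger that work on every request.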

Safeguards against mempool divergence

To prevent mempool-based estimates from being wildly wrong, the proposed design includes:

- Confirmation rate checks: Track what percentage of high-fee mempool transactions actually confirm. If too many don’t, disable mempool estimates.

- Staleness counters: Track how often a transaction “should have” been mined but wasn’t. Exclude it from calculations if the counter gets too high.

Pieter Wuille’s proposal in Issue #27995:

“We can look at what percentage of high-feerate mempool transactions confirm within a reasonable period of time. If that percentage is high, it’s unlikely we have a significantly diverging policy from the network’s aggregate policy. If it is too low, we may want to disable mempool-based fee estimation entirely.”
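Both safeguards reduce to simple counters. The sketch below is one way they might look; the thresholds (90% confirmation rate, 20 samples, 3 missed blocks) and all names are hypothetical placeholders, since the proposal does not fix specific values.

```python
def mempool_estimates_enabled(observations, min_rate=0.9, min_samples=20):
    """Confirmation rate check.

    `observations` holds one bool per high-feerate mempool transaction that
    was expected to confirm within the window: True if it actually did.
    """
    if len(observations) < min_samples:
        return False  # not enough evidence that our mempool matches miners'
    rate = sum(observations) / len(observations)
    return rate >= min_rate


def usable_transactions(missed_counts, max_missed=3):
    """Staleness counter check.

    `missed_counts` maps txid -> number of blocks in which the transaction
    "should have" been mined but was not. Transactions over the limit are
    excluded from mempool-based calculations.
    """
    return {txid for txid, missed in missed_counts.items() if missed <= max_missed}


healthy = [True] * 19 + [False]         # 95% of expected confirmations happened
diverged = [True] * 10 + [False] * 10   # only 50% did
print(mempool_estimates_enabled(healthy))
print(mempool_estimates_enabled(diverged))
print(usable_transactions({"tx_a": 0, "tx_b": 7}))
```

The first check acts as a global kill switch when the local mempool diverges from what miners include. The second prunes individual transactions that miners appear to be ignoring.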

Real-world benchmarking: mempool wins on responsiveness

Block (formerly Square) open-sourced Augur, a mempool-based fee estimator, with extensive benchmarking against Bitcoin Core and other providers.

Results (May 2025, 6-block target, 80% confidence):

| Provider | Miss Rate | Avg Overpayment | Total Difference |
|---|---|---|---|
| Augur (mempool) | 14.1% | 15.9% | 13.6% |
| WhatTheFee | 14.0% | 18.7% | 16.1% |
| Mempool.space | 24.4% | 21.7% | 16.4% |
| Bitcoiner.Live | 3.6% | 65.5% | 63.2% |
| Blockstream | 18.7% | 44.2% | 35.9% |

Augur achieved the best balance: low miss rate and low overpayment. Bitcoiner.Live’s 3.6% miss rate came at the cost of 65.5% average overpayment, so its users paid about two-thirds more than necessary.

Block’s conclusion:

“Augur delivers reliable confirmations with 5x lower costs than Bitcoiner.live while achieving a substantially lower miss rate than Blockstream.”

Why not just use mempool data in Bitcoin Core?

The mempool forecaster won’t be the default for automated RPC calls (like wallet sendtoaddress) until the safeguards are proven. The risk of policy divergence is too high.

But it will be available as a selectable mode for users who:

- Understand the tradeoffs

- Run well-connected nodes

- Want more responsive estimates (e.g., Lightning node operators)

“Even with these safeguards, I don’t think it’s reasonable to use as a default feerate estimate (at least not for automatic feerate decisions without human intervention, like sendtoaddress and friends), but it could be a separately selectable estimation mode.”

What services are doing today

Many Bitcoin businesses have already switched away from Bitcoin Core’s fee estimator:

- BTCPay Server: Switched away from Bitcoin Core estimates

- Decred DEX: Still sees overestimates even with economical mode

- Strike: Built a blended estimator combining Bitcoin Core and mempool data

This fracturing undermines Bitcoin’s trustlessness. Users running their own nodes should be able to rely on their own estimates, not third-party APIs.

The path forward

Bitcoin Core’s fee estimator is a cautious, manipulation-resistant design. It works reliably in stable conditions but lags during volatility. The upcoming mempool forecaster will add responsiveness while preserving the existing estimator’s security guarantees.

The key insight: you need both. Historical data for manipulation resistance, mempool data for real-time awareness, and safeguards to detect when the two diverge.

For now, users can:

- Use economical mode (default in 28.0+) for lower fees

- Understand that estimates lag during rapid changes

- Consider external estimators (mempool.space, Augur) for time-sensitive transactions

- Enable RBF to adjust fees if initial estimates miss

For the future: watch Issue #30392 and PR #31664. When mempool forecasting lands, Bitcoin Core will finally have the best of both worlds.

Sources

Bitcoin Core Issue #27995, Bitcoin Core Fee Estimation Algorithm (Alex Morcos), An Introduction to Bitcoin Core Fee Estimation (John Newbery), Bitcoin Core PR Review Club #31664, Bitcoin Core Issue #30392, Bitcoin Core PR #30275, Augur: An Open Source Bitcoin Fee Estimation Library (Block Engineering), Bitcoin Core Issue #27555. Data/status as of March 15, 2026.