Bitcoin Core's ChaCha20 Speedup: Why 3x Faster Encryption Matters for P2P Security

Bitcoin Core developer Cory Fields has proposed a vectorized implementation of the ChaCha20 cipher that achieves 2x speedup on x86-64 and 3x on ARM architectures. That matters because ChaCha20 is the encryption workhorse behind BIP324 v2 P2P encrypted transport, the protocol keeping Bitcoin node traffic private from eavesdroppers.

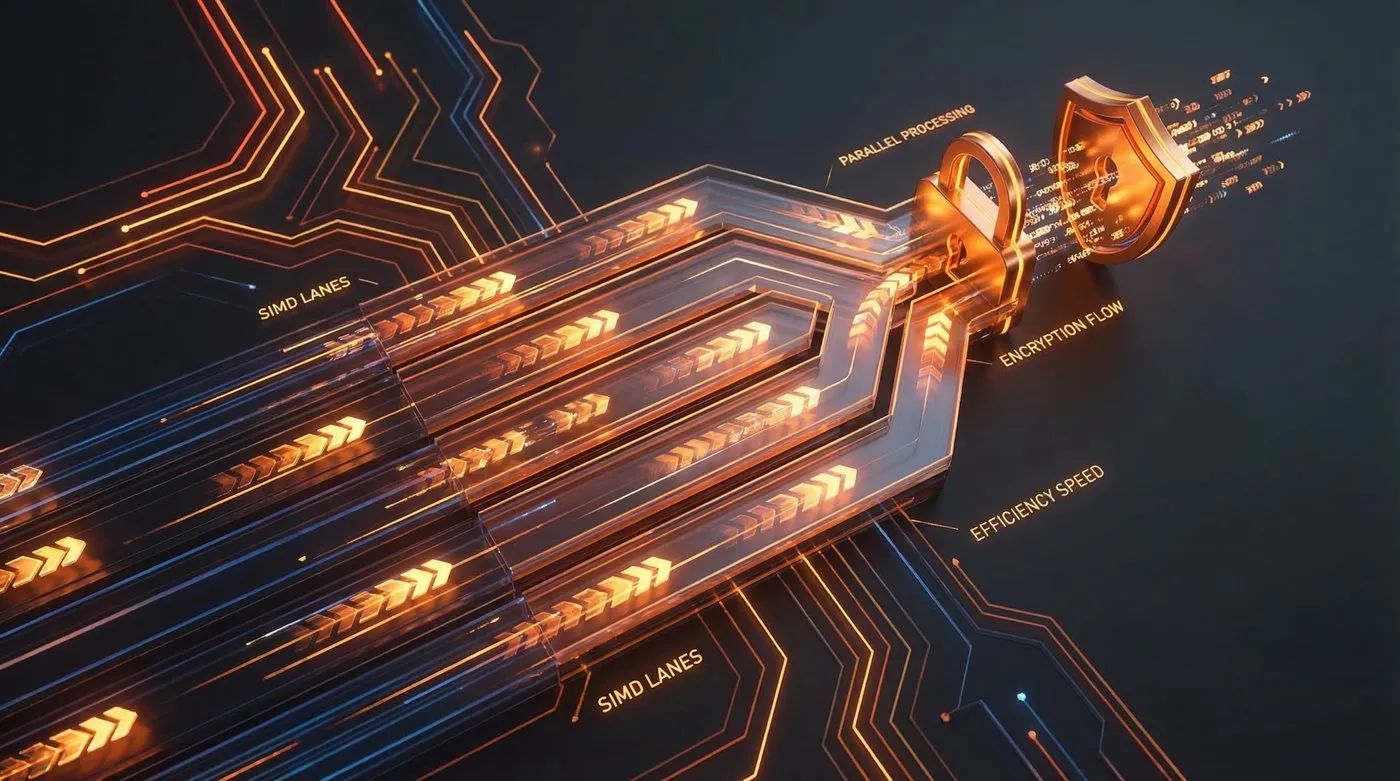

The optimization uses SIMD (Single Instruction, Multiple Data) to process multiple encryption blocks in parallel: a single instruction operates on several blocks’ worth of data instead of one. The result: lower CPU usage for encrypted connections, better headroom for low-power nodes, and encryption that’s actually cheaper than the old unencrypted protocol’s checksums.

Why ChaCha20?

ChaCha20 is a stream cipher designed by Daniel J. Bernstein. It’s an ARX cipher, meaning it builds security from simple operations: addition, rotation, and XOR. No complex lookup tables, no special hardware requirements.
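To make the ARX idea concrete, here is a minimal C++ sketch of the ChaCha quarter-round as specified in RFC 8439. The names `rotl32`, `quarter_round`, and `check_quarter_round` are illustrative and are not taken from Bitcoin Core.

```cpp
#include <cassert>
#include <cstdint>

// Rotate a 32-bit word left by n bits (valid for 0 < n < 32).
inline std::uint32_t rotl32(std::uint32_t v, int n) {
    return (v << n) | (v >> (32 - n));
}

// One ChaCha quarter-round: nothing but 32-bit add, XOR, and rotate.
inline void quarter_round(std::uint32_t& a, std::uint32_t& b,
                          std::uint32_t& c, std::uint32_t& d) {
    a += b; d ^= a; d = rotl32(d, 16);
    c += d; b ^= c; b = rotl32(b, 12);
    a += b; d ^= a; d = rotl32(d, 8);
    c += d; b ^= c; b = rotl32(b, 7);
}

// Self-check against the quarter-round test vector in RFC 8439, section 2.1.1.
inline void check_quarter_round() {
    std::uint32_t a = 0x11111111, b = 0x01020304, c = 0x9b8d6f43, d = 0x01234567;
    quarter_round(a, b, c, d);
    assert(a == 0xea2a92f4 && b == 0xcb1cf8ce && c == 0x4581472e && d == 0x5881c4bb);
}
```

Every operation here maps to a single instruction on ordinary CPUs, which is why the cipher needs neither lookup tables nor dedicated hardware.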

BIP324 chose ChaCha20Poly1305 (ChaCha20 for encryption plus Poly1305 for authentication) for three reasons:

Performance. With an optimized implementation, encrypting and authenticating a v2 message is at least as cheap as the double-SHA256 checksum that the unencrypted v1 protocol computes for every message.

Security. No known practical attacks. The same construction is used in TLS 1.3, WireGuard, and OpenSSH.

Simplicity. Runs efficiently on any CPU without hardware acceleration.

BIP324 support first shipped in Bitcoin Core 26.0 (December 2023) as an opt-in feature behind the -v2transport option. Since Bitcoin Core 27.0, v2 transport is enabled by default and negotiated automatically with peers that support it.

The bottleneck

According to Fields’ PR description, local profiling revealed that “chacha20 accounts for a substantial amount of the network thread’s time.”

That tracks. BIP324 uses ChaCha20 twice per packet:

- FSChaCha20 for length field encryption (3 bytes per packet)

- FSChaCha20Poly1305 for packet content encryption and authentication

Both ciphers rekey every 224 packets for forward secrecy. That’s a lot of ChaCha20 rounds in a busy node.

While it’s unclear whether speeding up ChaCha20 will directly improve network latency (packet arrival is usually the limiting factor), it definitely makes encryption more efficient. Less CPU load means nodes can handle more connections or run on lower-power hardware.

How SIMD vectorization works

SIMD lets CPUs process multiple pieces of data in parallel using wide registers. Modern CPUs have:

- x86-64: 128-bit (SSE), 256-bit (AVX2), 512-bit (AVX-512) registers

- ARM NEON: 128-bit registers

Instead of processing one 32-bit word at a time, SIMD can process 4, 8, or even 16 words simultaneously.

ChaCha20 operates on a 4×4 matrix of 32-bit words (64 bytes total). The core operation is the quarter-round, which mixes four words using addition, rotation, and XOR. The block function applies 20 rounds to the matrix, adds the original input matrix back in word by word, and emits the result as a 64-byte keystream block, which is then XORed with plaintext to produce ciphertext.
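The block function can be sketched in a few lines of scalar C++. This follows the state layout of RFC 8439 (4 constants, 8 key words, a 32-bit block counter, 3 nonce words); the helper names `make_state`, `chacha20_block`, and `xor_keystream` are illustrative, and this is a reference-style sketch, not Bitcoin Core's implementation.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>

using State = std::array<std::uint32_t, 16>;  // the 4x4 matrix, row by row

inline std::uint32_t rotl32(std::uint32_t v, int n) { return (v << n) | (v >> (32 - n)); }

inline void quarter_round(State& s, int a, int b, int c, int d) {
    s[a] += s[b]; s[d] ^= s[a]; s[d] = rotl32(s[d], 16);
    s[c] += s[d]; s[b] ^= s[c]; s[b] = rotl32(s[b], 12);
    s[a] += s[b]; s[d] ^= s[a]; s[d] = rotl32(s[d], 8);
    s[c] += s[d]; s[b] ^= s[c]; s[b] = rotl32(s[b], 7);
}

// Input matrix: "expand 32-byte k" constants, key, block counter, nonce.
inline State make_state(const std::array<std::uint32_t, 8>& key, std::uint32_t counter,
                        const std::array<std::uint32_t, 3>& nonce) {
    State s{};
    s[0] = 0x61707865; s[1] = 0x3320646e; s[2] = 0x79622d32; s[3] = 0x6b206574;
    for (int i = 0; i < 8; ++i) s[4 + i] = key[i];
    s[12] = counter;
    for (int i = 0; i < 3; ++i) s[13 + i] = nonce[i];
    return s;
}

// 20 rounds = 10 iterations of (4 column + 4 diagonal quarter-rounds),
// followed by adding the original input state word by word.
inline State chacha20_block(const State& in) {
    State x = in;
    for (int i = 0; i < 10; ++i) {
        quarter_round(x, 0, 4, 8, 12);  quarter_round(x, 1, 5, 9, 13);
        quarter_round(x, 2, 6, 10, 14); quarter_round(x, 3, 7, 11, 15);
        quarter_round(x, 0, 5, 10, 15); quarter_round(x, 1, 6, 11, 12);
        quarter_round(x, 2, 7, 8, 13);  quarter_round(x, 3, 4, 9, 14);
    }
    for (int i = 0; i < 16; ++i) x[i] += in[i];
    return x;
}

// XOR up to 64 bytes with one keystream block (words serialized little-endian).
inline void xor_keystream(const State& ks, std::uint8_t* data, std::size_t len) {
    assert(len <= 64);
    for (std::size_t i = 0; i < len; ++i)
        data[i] ^= static_cast<std::uint8_t>(ks[i / 4] >> (8 * (i % 4)));
}
```

Because encryption is a plain XOR with the keystream, calling `xor_keystream` twice with the same block restores the original bytes; decryption and encryption are the same operation.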

Key insight: each 64-byte keystream block depends only on the key, the nonce, and a block counter, never on another block’s output. If you need several blocks, you can compute them in parallel.

PR #34083 processes 2, 4, 6, 8, or 16 ChaCha20 states simultaneously depending on input size and CPU architecture. The implementation uses compiler built-ins (gcc/clang intrinsics) rather than hand-written assembly, allowing the compiler to generate optimized machine code for each architecture.

Fields notes: “In practice (at least on x86_64 and armv8), the compilers are able to produce assembly that’s not much worse than hand-written.” This means every architecture can benefit from vectorization without manual tuning for each CPU variant.
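The multi-state idea can be illustrated with a rough sketch that is not the code from the PR. Here each of the 16 state words becomes a small array holding that word for N independent blocks, and every ARX step loops over the N lanes. The PR relies on compiler vector built-ins; this version uses plain fixed-size arrays, which a compiler may be able to auto-vectorize. All names (`Lanes`, `MultiState`, `chacha20_blocks`) are illustrative.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>

using State = std::array<std::uint32_t, 16>;
// One "vector": the same state word taken from N independent blocks.
template <std::size_t N> using Lanes = std::array<std::uint32_t, N>;
template <std::size_t N> using MultiState = std::array<Lanes<N>, 16>;

// a += b in every lane.
template <std::size_t N>
inline void add_lanes(Lanes<N>& a, const Lanes<N>& b) {
    for (std::size_t i = 0; i < N; ++i) a[i] += b[i];
}

// d = rotl(d ^ a, n) in every lane.
template <std::size_t N>
inline void xor_rotl_lanes(Lanes<N>& d, const Lanes<N>& a, int n) {
    for (std::size_t i = 0; i < N; ++i) {
        const std::uint32_t v = d[i] ^ a[i];
        d[i] = (v << n) | (v >> (32 - n));
    }
}

template <std::size_t N>
inline void quarter_round(MultiState<N>& s, int a, int b, int c, int d) {
    add_lanes(s[a], s[b]); xor_rotl_lanes(s[d], s[a], 16);
    add_lanes(s[c], s[d]); xor_rotl_lanes(s[b], s[c], 12);
    add_lanes(s[a], s[b]); xor_rotl_lanes(s[d], s[a], 8);
    add_lanes(s[c], s[d]); xor_rotl_lanes(s[b], s[c], 7);
}

// Compute N consecutive keystream blocks from `base`, the input state of the
// first block. Lane i uses block counter base[12] + i.
template <std::size_t N>
inline std::array<State, N> chacha20_blocks(const State& base) {
    MultiState<N> in;
    for (std::size_t w = 0; w < 16; ++w)
        for (std::size_t i = 0; i < N; ++i)
            in[w][i] = base[w] + (w == 12 ? static_cast<std::uint32_t>(i) : 0u);
    MultiState<N> x = in;
    for (int r = 0; r < 10; ++r) {
        quarter_round(x, 0, 4, 8, 12);  quarter_round(x, 1, 5, 9, 13);
        quarter_round(x, 2, 6, 10, 14); quarter_round(x, 3, 7, 11, 15);
        quarter_round(x, 0, 5, 10, 15); quarter_round(x, 1, 6, 11, 12);
        quarter_round(x, 2, 7, 8, 13);  quarter_round(x, 3, 4, 9, 14);
    }
    std::array<State, N> out;
    for (std::size_t w = 0; w < 16; ++w)
        for (std::size_t i = 0; i < N; ++i) out[i][w] = x[w][i] + in[w][i];
    return out;
}

// Usage: four blocks computed together match four blocks computed one by one.
inline void check_lanes_match_single_blocks(State base) {
    const auto four = chacha20_blocks<4>(base);
    for (std::size_t i = 0; i < 4; ++i) {
        assert(four[i] == chacha20_blocks<1>(base)[0]);
        ++base[12];  // advance the block counter for the next single block
    }
}
```

The data layout is the point: with the same word from N blocks stored side by side, each add, XOR, or rotate can become one wide instruction covering all N blocks.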

Batched length encryption

BIP324 uses a separate FSChaCha20 cipher for encrypting the 3-byte length field of each packet. Normally, you’d need a fresh 64-byte keystream for every 3 bytes of ciphertext. Wasteful.

The implementation batches length encryption: one ChaCha20 block generates 64 bytes of keystream, which is consumed 3 bytes at a time across multiple packets. This avoids “wasting 61 pseudorandom bytes per packet” and makes the length cipher nearly free.
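A minimal sketch of that buffering idea follows, with a placeholder in place of the real cipher. `BufferedKeystream` and its `next_block` callback are hypothetical names; in a real implementation the callback would run the ChaCha20 block function with the next counter value.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <functional>
#include <utility>

using Block = std::array<std::uint8_t, 64>;

class BufferedKeystream {
public:
    // `next_block` stands in for "compute the next 64-byte ChaCha20 block".
    explicit BufferedKeystream(std::function<Block()> next_block)
        : m_next_block(std::move(next_block)) {}

    // XOR `len` bytes with the next unused keystream bytes. A 3-byte length
    // field may straddle two blocks, so the refill check is done per byte.
    void crypt(std::uint8_t* data, std::size_t len) {
        assert(data != nullptr || len == 0);
        for (std::size_t i = 0; i < len; ++i) {
            if (m_used == m_buf.size()) {  // buffer exhausted: fetch one block
                m_buf = m_next_block();
                m_used = 0;
                ++m_blocks_computed;
            }
            data[i] ^= m_buf[m_used++];
        }
    }

    std::size_t blocks_computed() const { return m_blocks_computed; }

private:
    std::function<Block()> m_next_block;
    Block m_buf{};
    std::size_t m_used = 64;  // start "empty" so the first use fetches a block
    std::size_t m_blocks_computed = 0;
};
```

With this scheme, encrypting the length fields of 64 packets consumes 192 keystream bytes, which is exactly 3 block computations instead of 64.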

What this means for node operators

Short answer: Lower CPU usage for encrypted connections.

Long answer:

- Current v2 adopters (running Bitcoin Core ≥26.0 with -v2transport) will see reduced encryption overhead once this PR merges

- Low-power nodes (Raspberry Pi, old laptops) benefit most, as encryption becomes less of a bottleneck

- High-traffic nodes (block explorers, large exchanges) can handle more connections without CPU saturation

BIP324’s performance section notes that roughly the same computation power is required for encrypting and authenticating a v2 message as for the v1 double-SHA256 checksum. The vectorized implementation makes v2 encryption cheaper than v1 checksums, removing any performance penalty for privacy.

Technical implementation details

Fields spent significant effort coaxing gcc and clang into generating optimal code. Key tricks:

Force inlining. All helpers are marked ALWAYS_INLINE to avoid register clobbering.

Loop unrolling. Uses #pragma GCC unroll n and recursive inline template loops.

Avoid lambdas. Prevents compiler optimization misses.

Pass by reference. Avoids ABI changes when returning vectors.
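The following sketch shows what three of these tricks can look like in isolation. It is not code from the PR: `SKETCH_ALWAYS_INLINE`, `Vec`, `vec_add`, and `add_each` are hypothetical names, and `Vec` is a plain array standing in for a real vector type. It assumes a C++17 compiler for `if constexpr`.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>

// Force inlining where the compiler supports it (assumed macro, for illustration).
#if defined(__GNUC__) || defined(__clang__)
#define SKETCH_ALWAYS_INLINE inline __attribute__((always_inline))
#else
#define SKETCH_ALWAYS_INLINE inline
#endif

using Vec = std::array<std::uint32_t, 4>;  // stand-in for one 128-bit vector

// Pass by reference: the result lands in `a`; no vector is returned by value.
SKETCH_ALWAYS_INLINE void vec_add(Vec& a, const Vec& b) {
#pragma GCC unroll 4
    for (std::size_t i = 0; i < 4; ++i) a[i] += b[i];
}

// Recursive inline template "loop": instantiates vec_add once per index I,
// so no runtime loop counter remains after inlining.
template <std::size_t I, std::size_t N>
SKETCH_ALWAYS_INLINE void add_each(std::array<Vec, N>& dst, const std::array<Vec, N>& src) {
    if constexpr (I < N) {
        vec_add(dst[I], src[I]);
        add_each<I + 1, N>(dst, src);
    }
}

// Usage: add two groups of vectors element by element.
inline void check_add_each() {
    std::array<Vec, 2> dst = {Vec{1, 2, 3, 4}, Vec{5, 6, 7, 8}};
    const std::array<Vec, 2> src = {Vec{10, 10, 10, 10}, Vec{20, 20, 20, 20}};
    add_each<0>(dst, src);  // N is deduced from the arguments
    assert(dst[0][0] == 11u && dst[1][3] == 28u);
}
```

Compilers that do not recognize `#pragma GCC unroll` simply ignore it, possibly with a warning, so the sketch stays portable.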

The PR defines which parallelism levels to enable per architecture:

x86-64 (initial implementation):

- Disables 16-state and 8-state (not yet profitable)

- Enables 6-state, 4-state, 2-state

ARM NEON:

- Enables all multi-state levels (2/4/6/8/16)
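One way to picture how such per-architecture settings could drive the choice of batch size is sketched below. The struct, the constants, and the greedy "largest enabled width that still fits" policy are assumptions made for illustration; the PR's actual selection logic may differ.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Which multi-state widths are enabled (hypothetical struct, not the PR's).
struct StateConfig {
    bool s16, s8, s6, s4, s2;
};

// Settings as described above: x86-64 without 16/8-state, ARM NEON with all.
constexpr StateConfig kX86_64{false, false, true, true, true};
constexpr StateConfig kArmNeon{true, true, true, true, true};

// Assumed policy: take the largest enabled width that fits the remaining blocks.
constexpr std::size_t pick_batch(const StateConfig& cfg, std::size_t blocks_left) {
    if (cfg.s16 && blocks_left >= 16) return 16;
    if (cfg.s8 && blocks_left >= 8) return 8;
    if (cfg.s6 && blocks_left >= 6) return 6;
    if (cfg.s4 && blocks_left >= 4) return 4;
    if (cfg.s2 && blocks_left >= 2) return 2;
    return 1;  // fall back to the scalar path
}

// Split an input of `blocks` 64-byte blocks into a sequence of batch sizes.
inline std::vector<std::size_t> plan_batches(const StateConfig& cfg, std::size_t blocks) {
    std::vector<std::size_t> plan;
    while (blocks > 0) {
        const std::size_t n = pick_batch(cfg, blocks);
        assert(n <= blocks);
        plan.push_back(n);
        blocks -= n;
    }
    return plan;
}

static_assert(pick_batch(kX86_64, 16) == 6, "x86-64 tops out at 6 states here");
static_assert(pick_batch(kArmNeon, 16) == 16, "NEON can take all 16 at once");
```

Under these assumptions, a 17-block input would be handled as 6 + 6 + 4 + 1 on x86-64 and as 16 + 1 on ARM NEON.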

Future PRs will add AVX2 and AVX-512 support, which can process even wider vectors. Platforms requiring runtime detection will come as a follow-up.

Forward secrecy and rekeying

BIP324 uses forward-secure rekeying to limit damage from key compromise. Every 224 packets, the implementation:

- Generates 32 bytes of keystream with a special nonce

- Uses those 32 bytes as the new key

- Resets the packet counter to 0

This means an attacker who steals the current key can decrypt only traffic sent after the most recent rekey, not the earlier session history. The old key cannot be recovered from the new one.
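The three steps can be sketched as a small key-ratchet class. This is a simplified illustration: it takes the new key directly from one ChaCha20 keystream block, whereas BIP324's FSChaCha20Poly1305 derives it through the AEAD. The class name `ForwardSecureKey`, the `packet_done` method, and the configurable `rekey_interval` parameter are all hypothetical.

```cpp
#include <array>
#include <cassert>
#include <cstdint>

using Key = std::array<std::uint32_t, 8>;
using State = std::array<std::uint32_t, 16>;

inline std::uint32_t rotl32(std::uint32_t v, int n) { return (v << n) | (v >> (32 - n)); }

inline void qr(State& s, int a, int b, int c, int d) {
    s[a] += s[b]; s[d] ^= s[a]; s[d] = rotl32(s[d], 16);
    s[c] += s[d]; s[b] ^= s[c]; s[b] = rotl32(s[b], 12);
    s[a] += s[b]; s[d] ^= s[a]; s[d] = rotl32(s[d], 8);
    s[c] += s[d]; s[b] ^= s[c]; s[b] = rotl32(s[b], 7);
}

// Plain ChaCha20 block function (RFC 8439 layout: constants, key, counter, nonce).
inline State chacha20_block(const Key& key, std::uint32_t counter,
                            const std::array<std::uint32_t, 3>& nonce) {
    State in{};
    in[0] = 0x61707865; in[1] = 0x3320646e; in[2] = 0x79622d32; in[3] = 0x6b206574;
    for (int i = 0; i < 8; ++i) in[4 + i] = key[i];
    in[12] = counter;
    for (int i = 0; i < 3; ++i) in[13 + i] = nonce[i];
    State x = in;
    for (int r = 0; r < 10; ++r) {
        qr(x, 0, 4, 8, 12); qr(x, 1, 5, 9, 13); qr(x, 2, 6, 10, 14); qr(x, 3, 7, 11, 15);
        qr(x, 0, 5, 10, 15); qr(x, 1, 6, 11, 12); qr(x, 2, 7, 8, 13); qr(x, 3, 4, 9, 14);
    }
    for (int i = 0; i < 16; ++i) x[i] += in[i];
    return x;
}

class ForwardSecureKey {
public:
    ForwardSecureKey(const Key& key, std::uint32_t rekey_interval)
        : m_key(key), m_interval(rekey_interval) {
        assert(rekey_interval > 0);
    }

    // Call once per packet, after it has been encrypted or decrypted.
    void packet_done() {
        if (++m_packet_counter == m_interval) rekey();
    }

    const Key& key() const { return m_key; }
    std::uint32_t packet_counter() const { return m_packet_counter; }
    std::uint64_t rekey_counter() const { return m_rekey_counter; }

private:
    void rekey() {
        // Special nonce that ordinary packets never use: 0xFFFFFFFF followed
        // by the 64-bit rekey counter (low word first).
        const std::array<std::uint32_t, 3> nonce = {
            0xFFFFFFFFu, static_cast<std::uint32_t>(m_rekey_counter),
            static_cast<std::uint32_t>(m_rekey_counter >> 32)};
        const State ks = chacha20_block(m_key, 0, nonce);
        for (int i = 0; i < 8; ++i) m_key[i] = ks[i];  // first 32 keystream bytes
        m_packet_counter = 0;
        ++m_rekey_counter;
    }

    Key m_key;
    std::uint32_t m_interval;
    std::uint32_t m_packet_counter = 0;
    std::uint64_t m_rekey_counter = 0;
};
```

Both peers run the same ratchet in lockstep, so they arrive at the same new key without exchanging any extra messages. Since the old key is overwritten and the block function is not practically invertible, a later compromise does not expose it.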

What’s next

This is part 1 of a series of PRs for ChaCha20. According to Fields, it makes sense to review the generic implementation and tune architecture-specific settings before adding runtime-dependent platforms.

Upcoming work:

- AVX2 support (256-bit vectors for newer Intel/AMD CPUs)

- AVX-512 support (512-bit vectors on Intel and AMD CPUs that implement it)

- Runtime CPU detection (choose optimal implementation at runtime)

Once vectorized encryption costs less CPU than the plaintext v1 protocol’s checksums, there’s no performance reason not to use it. This could push the network toward 100% encrypted P2P traffic within a few years.

Bitcoin Core PR #34083, BIP324 Specification, Bitcoin Core 26.0 Release, RFC 8439 (ChaCha20), Bitcoin Optech: v2 P2P Transport. Data current as of March 16, 2026.